Across much of the developed world, governments are translating social contracts into code. The UK’s digital ID rollout is simply one of the first to garner serious attention.

The UK government presents its digital identity initiative as simple, even practical. Secure. Voluntary. Privacy-preserving. A tool for proving who you are, without standing in line or waiting for forms in the mail. A step toward modernization.

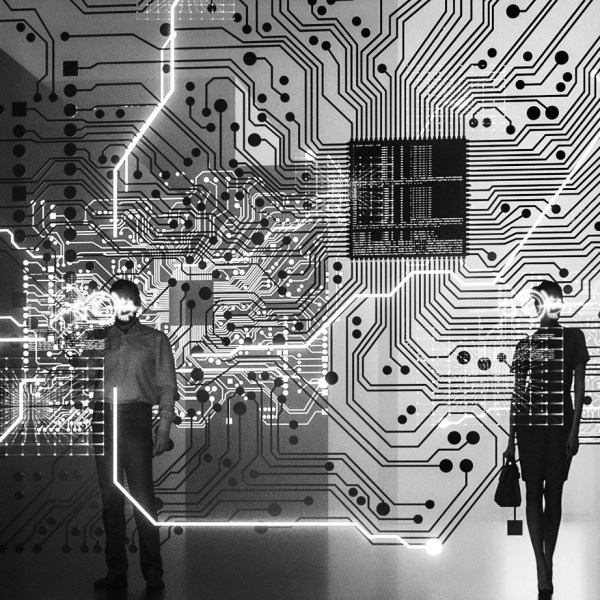

The Digital ID isn’t being built in isolation. It’s emerging from a web of institutions that have long studied human systems — economic, social, and behavioral — and are now embedding those lessons into code.

Understanding these connections matters. The same expertise used to map, predict, and influence consumer behavior is now being applied to national digital identity infrastructure.

The Tech They Sell to You

Officially, the UK’s digital ID system operates through the Digital Verification Services (DVS) Trust Framework, which was placed on a statutory footing by the Data (Use and Access) Act 2025, introduced in Parliament as the Data (Use & Access) Bill. It is designed to be interoperable and user-centric, allowing citizens to authenticate identity digitally across multiple services (banks, government, utilities) without repeatedly sharing documents.

The government’s communications emphasize privacy and choice. Each user controls their ID, and only necessary data is shared with service providers. Biometric data, at least at launch, is limited: photos of faces, not fingerprints or behavioral tracking. On paper, it is a modest system. Yet the technical scaffolding is built for expansion. Standards, APIs, and privacy frameworks could, in principle, accommodate far more data points than are currently being collected.
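To make the "only necessary data is shared" claim concrete, here is a minimal Python sketch of selective disclosure. It is an illustration under stated assumptions, not the trust framework's actual interface: the `Credential` class, the `disclose` function, and every attribute name are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Credential:
    """Hypothetical wallet-held credential: a set of named attributes."""
    attributes: dict[str, object] = field(default_factory=dict)


def disclose(credential: Credential, requested: set[str], consented: set[str]) -> dict[str, object]:
    """Release only the attributes that are both requested and consented to."""
    allowed = requested & consented
    return {name: value for name, value in credential.attributes.items() if name in allowed}


# Illustrative data only; none of these field names come from an official schema.
wallet = Credential({
    "full_name": "Alex Example",
    "date_of_birth": "1990-01-01",
    "face_photo_ref": "photo-001",
    "right_to_work": True,
})

# A service asks for three attributes; the holder consents to two of them.
shared = disclose(
    wallet,
    requested={"full_name", "right_to_work", "date_of_birth"},
    consented={"full_name", "right_to_work"},
)
print(shared)

# Nothing in this structure limits which attribute types can be added later.
wallet.attributes["fingerprint_ref"] = "fp-001"
```

In a design like this the restraint lives in policy, not in the data structure. The same `disclose` function would handle a fingerprint or behavioral attribute with no change to the code, which is the sense in which such scaffolding is built for expansion.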

Behind the government’s polished language of security and efficiency sit institutions shaping not just how data is handled, but how people are expected to interact with systems of trust.

This is where the architects of the system come in, and where my warning begins for those in the UK.

Open Data Institute: Shaping the Rules

The ODI, headquartered in London, submitted formal written evidence to Parliament during the passage of the Data (Use & Access) Bill. Their recommendations were technical but far-reaching:

“We recommend a new clause tasking the Secretary of State, in consultation with relevant standards bodies (W3C, ISO, IEEE) to develop an automated privacy framework.” (bills.parliament.uk)

“Citizens should be able to use the holder in the Gamma framework to pull in data to reshare at their discretion.” (bills.parliament.uk)

These statements reflect a focus on interoperable, citizen-centered control. But they also establish technical flexibility: the very framework that allows citizens to pull in and share data could, in future iterations, include behavioral or biometric elements. The ODI does not advocate for this explicitly; it is the architecture that permits it.

If the ODI helps shape the system’s technical skeleton, the Tavistock Institute shapes its behavioral soul: the psychology of adoption and normalization.

Tavistock Institute: Historical Continuity in Behavioral Systems

The Tavistock Institute, listed as a partner in the WEF’s Future of Personalized Well-being initiative, has a far longer history that bears on the Digital ID.

Founded in 1947, Tavistock grew out of the Tavistock Clinic, which conducted wartime studies in morale, psychological resilience, and propaganda. It became an institute focused on social systems, organizational psychology, and behavioral adaptation: fields that, while individually benign, form a powerful mix when aligned with technology and governance. Governments, NATO, and corporations have sought its expertise for decades.

Their involvement in the WEF initiative is framed in terms of improving health outcomes:

“The Future of Personalized Well-being initiative aims to harness the power of digital biology to be the game-changer needed to improve people’s quality of life.” (World Economic Forum)

It is easy to dismiss this as abstract or aspirational. Yet when behavioral expertise historically applied to wartime and organizational contexts now intersects with digital identity systems, the implications for user adoption, normalization of surveillance, and behavioral nudges are difficult to ignore. Tavistock is not writing laws, but its work informs the design and reception of systems that shape how people interact with their own data.

The World Economic Forum’s Reimagining Digital ID paper explicitly notes:

“These communications campaigns should explain the link between privacy and digital ID and seek to counter misinformation and conspiracy theories related to digital ID.”

Given Tavistock’s expertise in behavioral systems and its partnerships in related initiatives, it is reasonable to surmise that institutions like it may play a central role in shaping public perception and acceptance of digital IDs. The task is not merely informational; it is a subtle orchestration of narrative and trust, guiding citizens toward voluntary submission within the framework being constructed.

Precision Consumer 2030: The Bridge Between Digital IDs and Behavior

Co-developed by the WEF, IBM, 23andMe, and over 20 other partners, Precision Consumer 2030 envisions personalized data streams—biosensors, wearables, AI predictions—that guide individual behavior. The report emphasizes the potential benefits: preventive interventions, better individual health outcomes, more efficient services.

“Advancements in technology and the use of personal biodata hold the promise to move beyond mass ‘one size fits all’ solutions to more personalized solutions that preventatively improve well-being…New business, operating, and governance models are needed to realize the benefits of personalized well-being solutions at scale” (World Economic Forum)

The conceptual overlap with digital identity is clear. Digital IDs create a legally recognized digital anchor for a person, while Precision Consumer 2030 envisions systems that measure, predict, and guide the same person’s behavior. The same principles—data collection, interoperability, user-centric design—apply in both contexts. The difference is in scale and integration: one is domestic, one is global; one is currently modest, one is aspirational.

The Implications

Taken together, these strands suggest the UK’s digital ID rollout is more than convenience. It is a platform designed with foresight into behavior, interoperability, and potential expansion. Citizens will control their data, but the architecture will allow for more data types, more services, and greater influence over the parameters of individual participation in society. As Prime Minister Keir Starmer stated plainly:

“You will not be able to work in the United Kingdom if you do not have digital ID.” (2025 Global Progress Action Summit, in London)

There’s no reason to believe that statement is the end of the plan, nor the limit of its scope. Whether meant literally or rhetorically, the remark reflects a policy direction where participation in the economy becomes increasingly contingent on digital verification.

The connections are not speculation. The evidence is public: in the ODI’s written submissions to Parliament, the WEF partnerships, and the Precision Consumer 2030 report itself. What is less public is the extent to which future iterations could integrate behavioral and biometric elements, or the subtle ways adoption can be encouraged through system design.

The lesson is not fear, but awareness: the architecture exists, the actors are connected, and the system is being built in plain sight. How it will be used, and how it will evolve, is a question that rests partly with the public—and partly with the invisible hands that designed the foundations.

Questions to Carry Forward

- How will the UK define the limits of digital ID data collection as new biometric and behavioral data types become feasible?

- What transparency exists around the influence of organizations like the ODI and Tavistock on policy and system design?

- If the Precision Consumer 2030 framework informs system design, how far might predictive and adaptive digital services extend into everyday life?

The government’s framing is simple: convenience, security, privacy. The system’s potential is far more complex.

Complex enough that civil-liberty groups such as Big Brother Watch, Liberty, and the Open Rights Group, along with campaigns like NO2ID and StopBritCard, have already warned that digital identity schemes risk the creation of a checkpoint society — one where access to work, housing, and even participation in public life may hinge on a single government-issued credential. Their objections aren’t noise from the fringe; they touch on cybersecurity fragility, digital exclusion, and the quiet expansion of state reach under the banner of modernization. Even parties outside Westminster’s mainstream, like Plaid Cymru, have raised these same alarms. That alignment — activists, technologists, and politicians speaking the same cautionary language — suggests this isn’t paranoia but recognition of an emerging pattern.

For those following the threads of emerging governance and digital influence, this discussion of the UK’s digital ID is only part of the story. The systems being built today draw on principles first outlined in Precision Consumer 2030, where personal data, predictive analytics, and behavioral insight converge under the guise of well-being. To see how these frameworks were imagined—and how they quietly shape the infrastructure now being deployed—read my earlier exploration, Precision Consumer 2030: Wellness as a Window into You, republished by OffGuardian.org. Understanding the blueprint is the first step toward recognizing the structure being quietly built around us.

© 2025 Zakariyas James. First shared here at theruminationcompilation.wordpress.com.